The Component Gallery started as a hobby project: an opportunity to learn new tech and something to talk about in job interviews. It launched with zero fanfare and, apart from some ‘dogfooding’[1] by me and a couple of colleagues, it was just another side project. I didn’t expect it to find an audience, but it did. Seeing my work featured on the homepage of Smashing Magazine, being named the 17th “Hottest Frontend-Tool” of 2021 on CSS-Tricks, and receiving lots of kind messages from strangers on the internet have given me the motivation to keep working on it.

The numbers

Data points

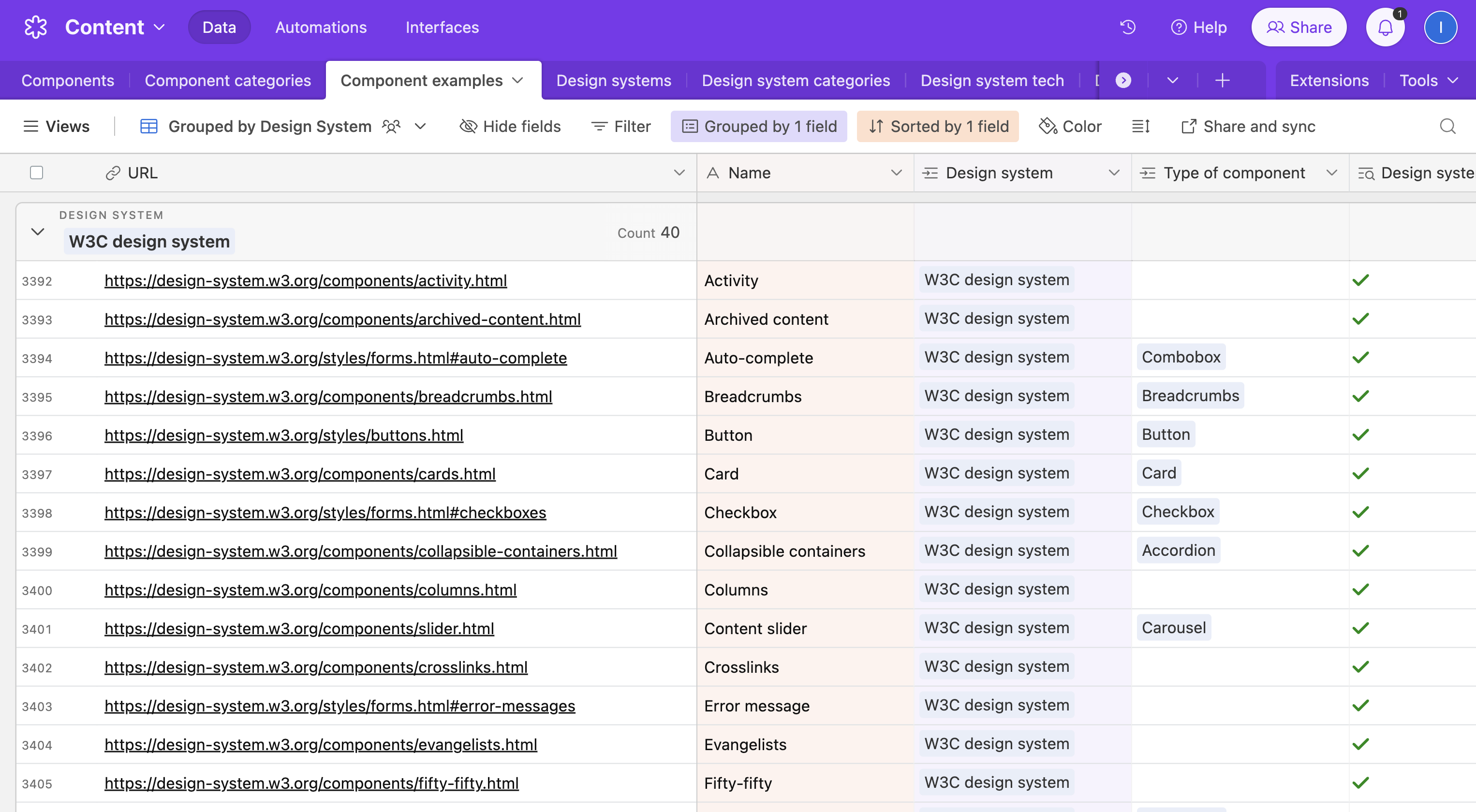

At the time of writing, the site includes:

- 57 components

- 94 design systems

- 2,628 component examples

These figures don’t include the many unpublished systems that I haven’t got round to auditing, nor the nearly 1,000 component examples that don’t fit neatly into one of my 57 component-shaped holes. They also exclude the systems that I’ve had to remove because they’re no longer publicly available.

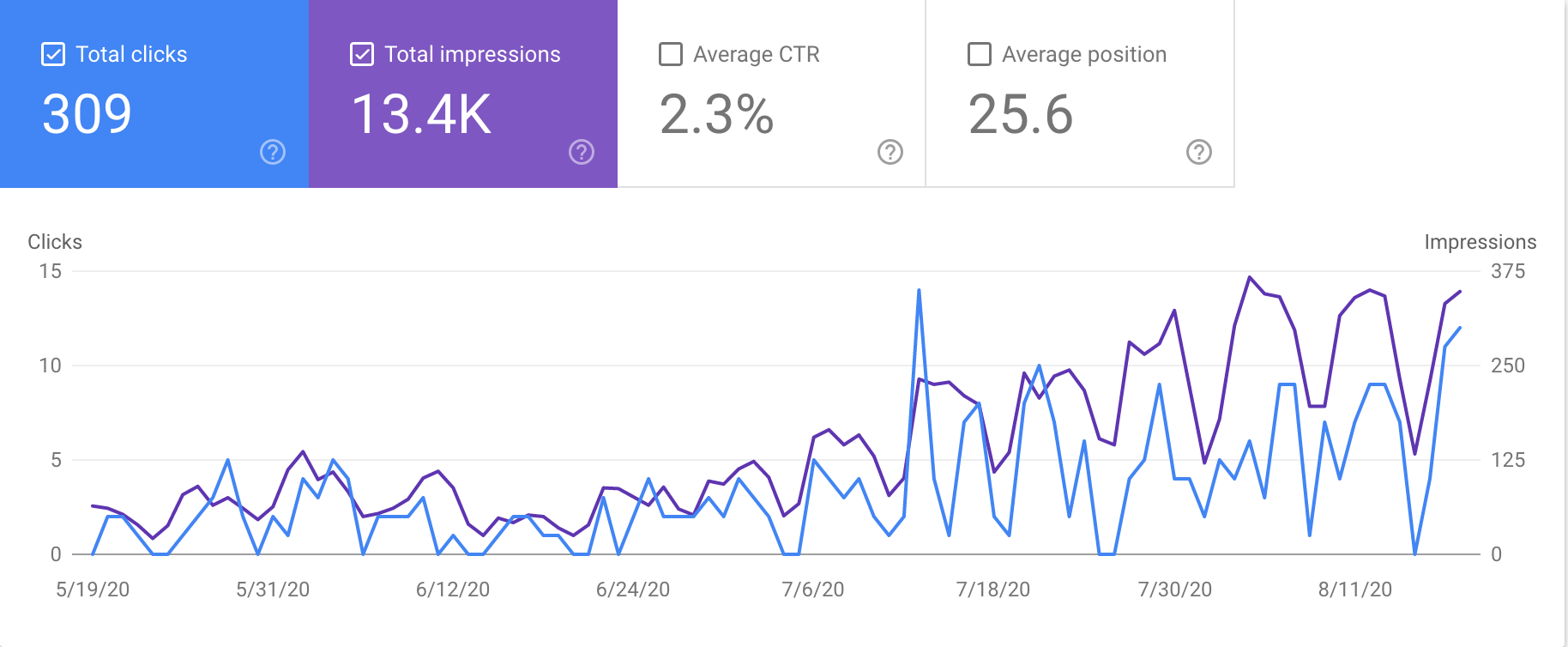

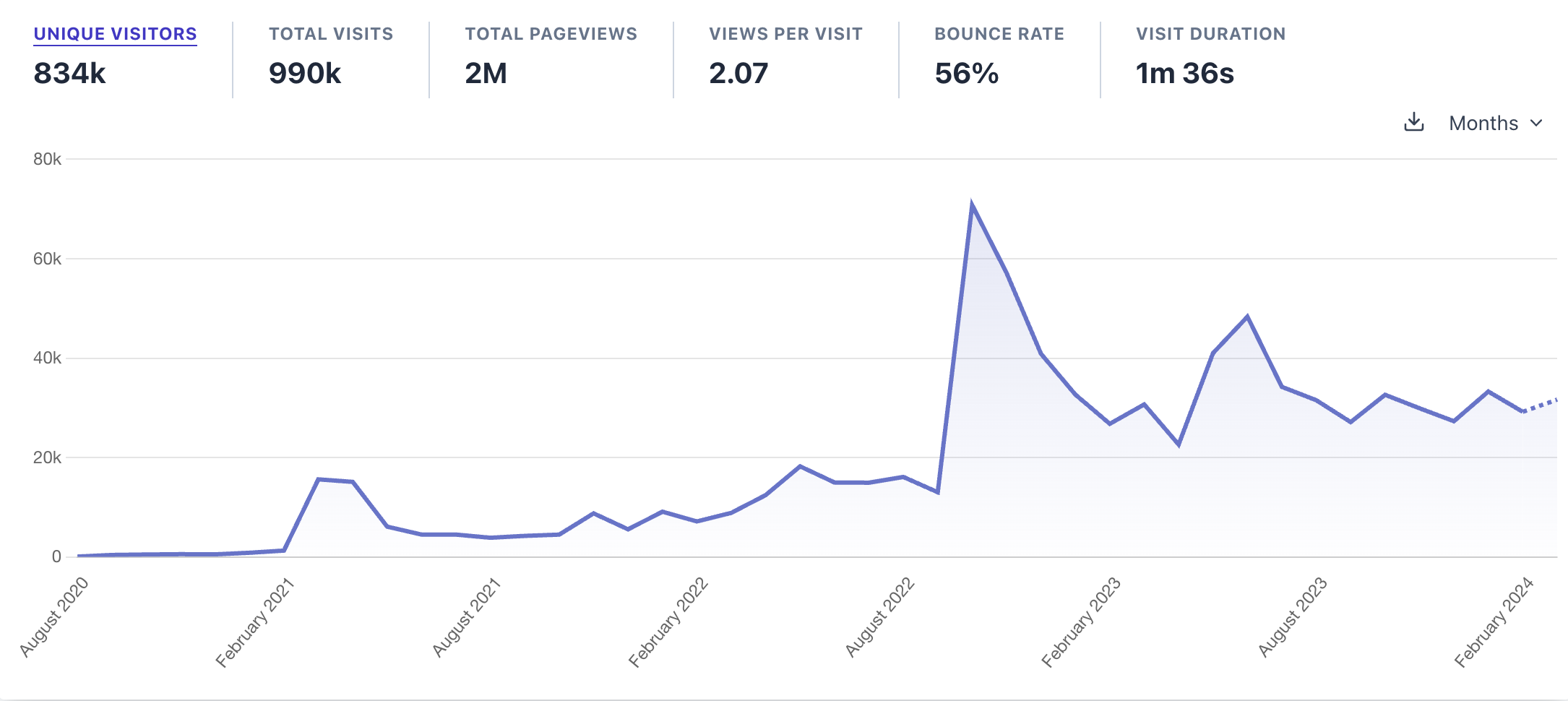

Visitors

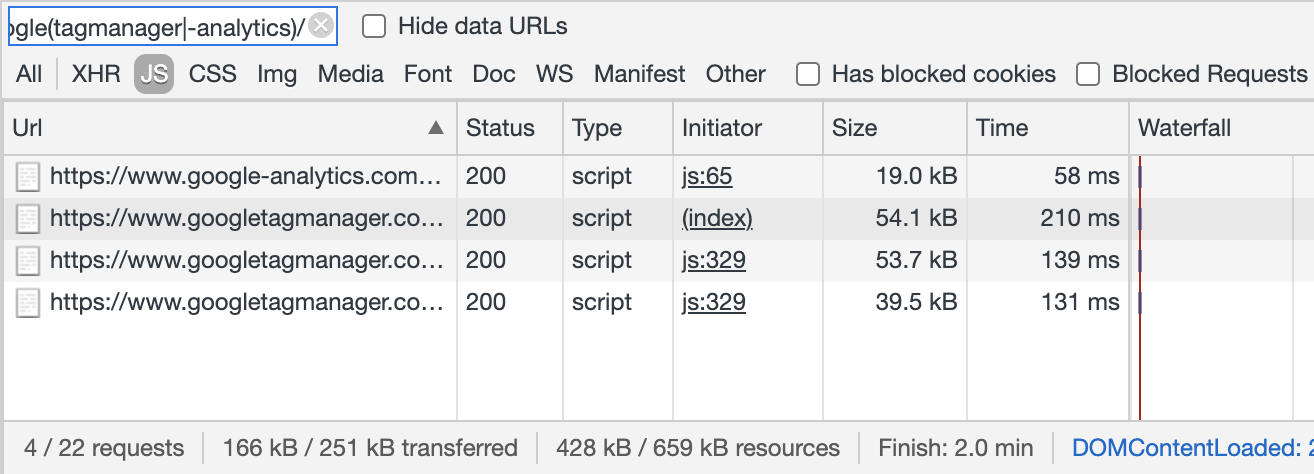

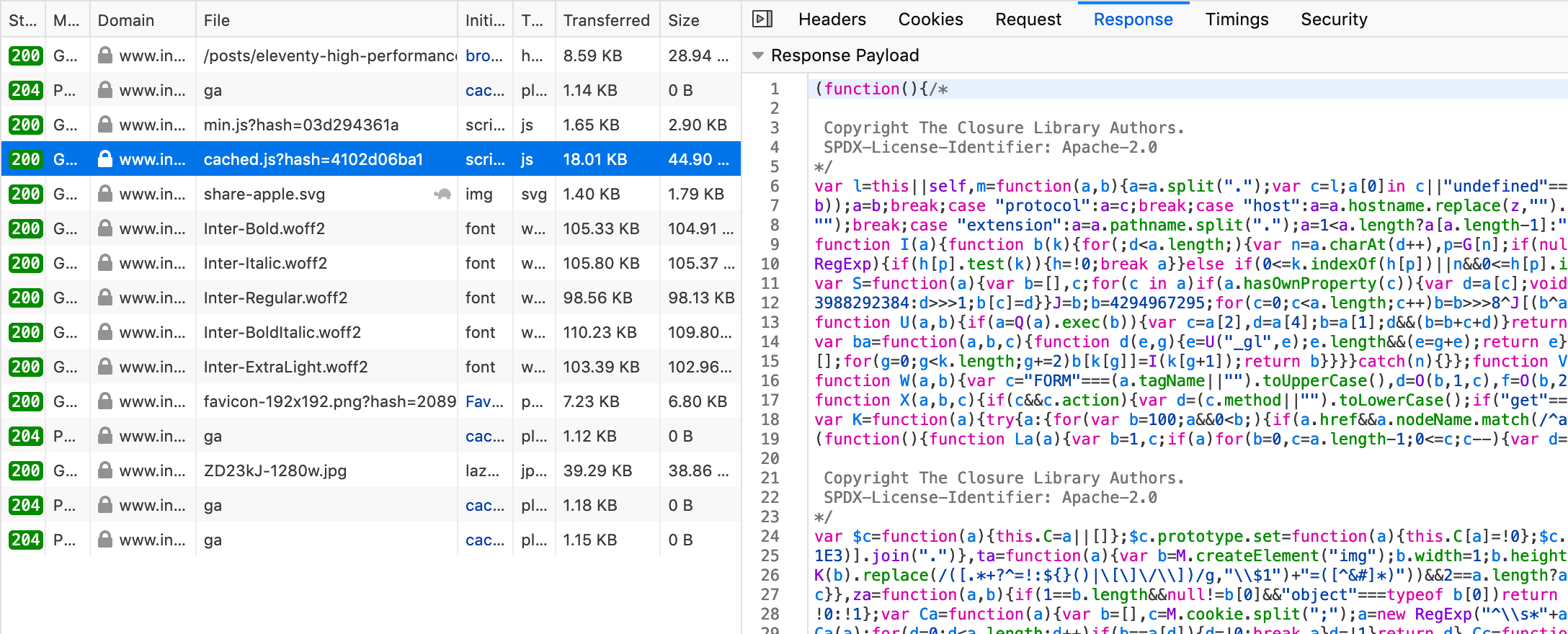

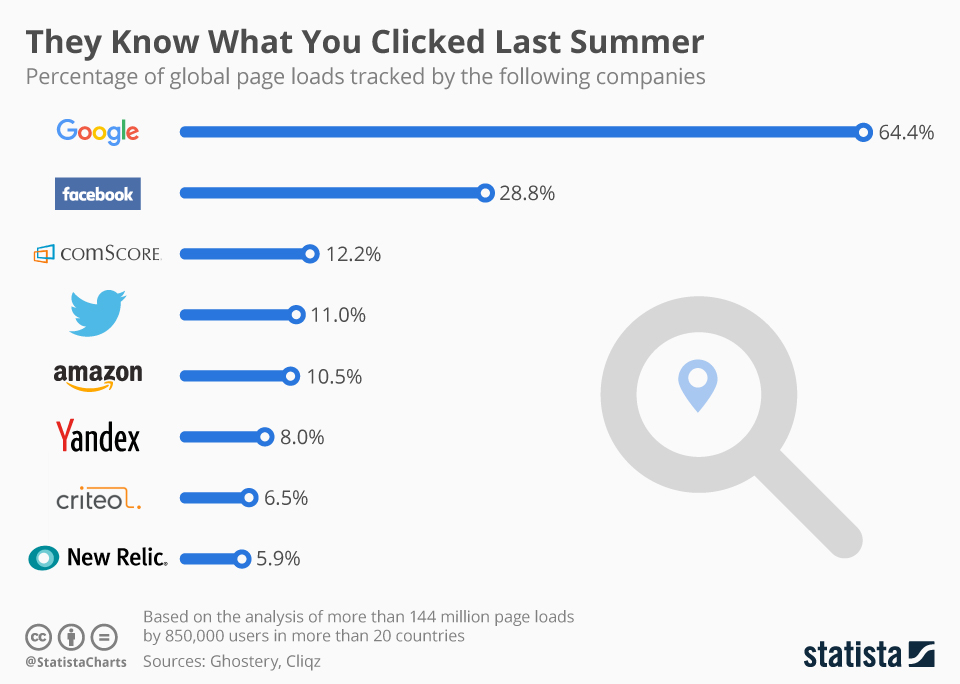

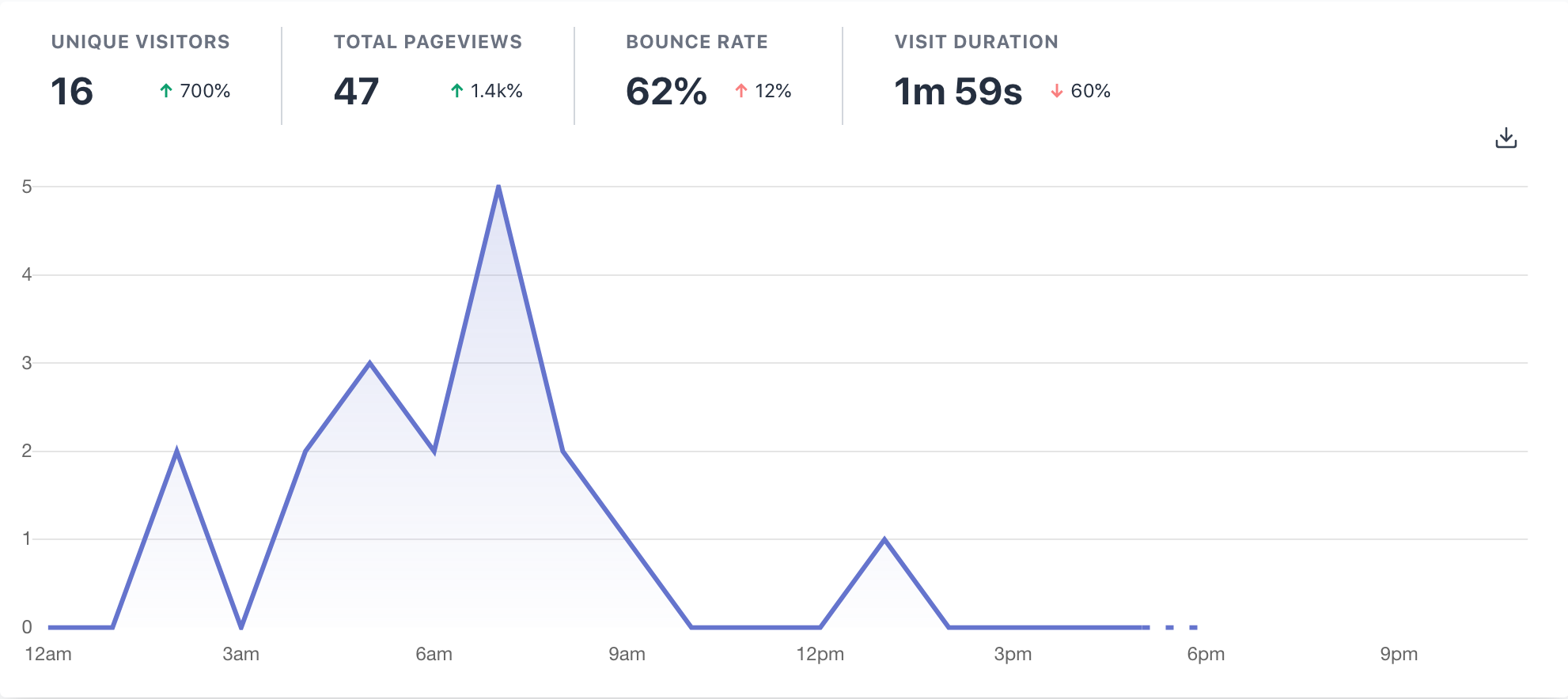

I use Plausible, which, like all client-side analytics, can easily be circumvented by blockers. However, it’s good enough to show trends. The spikes generally correspond with popular social media posts, but on an average weekday the site receives one to two thousand visitors.

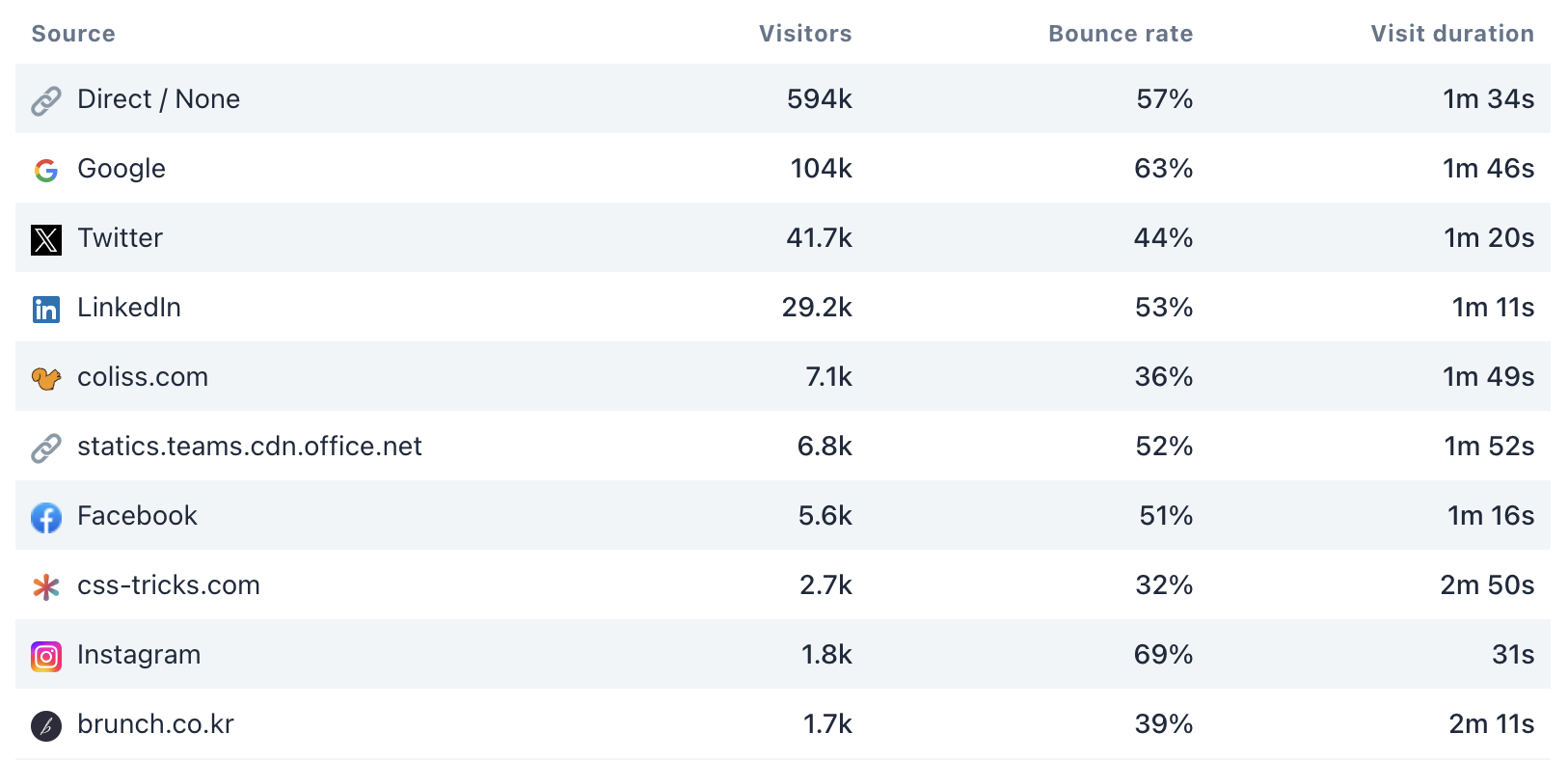

What’s most interesting to me is the referrer data: direct traffic is the biggest driver of visitors, with Google a distant second. Among social networks, Twitter is still the largest source, with LinkedIn not far behind. Instagram is a surprising inclusion in the top 10, considering how much Meta discourage external links. The only conclusion I can draw from its high bounce rate and short visit duration is that Instagram users have much shorter attention spans than the average visitor.

The strangest inclusion to me is the statics.teams.cdn.office.net domain, which appears to be a Content Delivery Network (CDN) used by Microsoft Teams and related Microsoft services. As someone lucky enough to very rarely use Microsoft’s enterprise software, I’m a little baffled as to why it ranks so highly.

Costs

The running costs of the site consist of:

- Domain: $24.16/year

- Database: I was initially on the free Airtable plan, but I quickly reached the record limit. It now costs me $9/month

- Hosting: There have been months where I’ve approached the limits of Netlify’s free tier, but it’s never cost me a penny: $0

- Analytics: I’m not a huge fan of analytics in general, but Plausible’s “privacy-focused” approach is far less creepy than most of its competitors: $12/month
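Added up over a year, with each monthly item multiplied by 12 (a back-of-the-envelope figure that assumes prices stay flat and ignores tax and exchange rates), the running costs come to:

$$
\underbrace{24.16}_{\text{domain}} + 12 \times \left(\underbrace{9}_{\text{Airtable}} + \underbrace{0}_{\text{Netlify}} + \underbrace{12}_{\text{Plausible}}\right) = 24.16 + 252 = \$276.16\ \text{per year}
$$

That works out at roughly $23 a month.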

While I would love to get paid for my work on it, implementing ads or a paywall for bonus content feels like more hassle than it’s worth. I already have a full-time job, and maintenance costs are low enough that I’m fine to absorb them. I also worry that introducing monetisation could detract from my enjoyment and make it start to feel like real work.

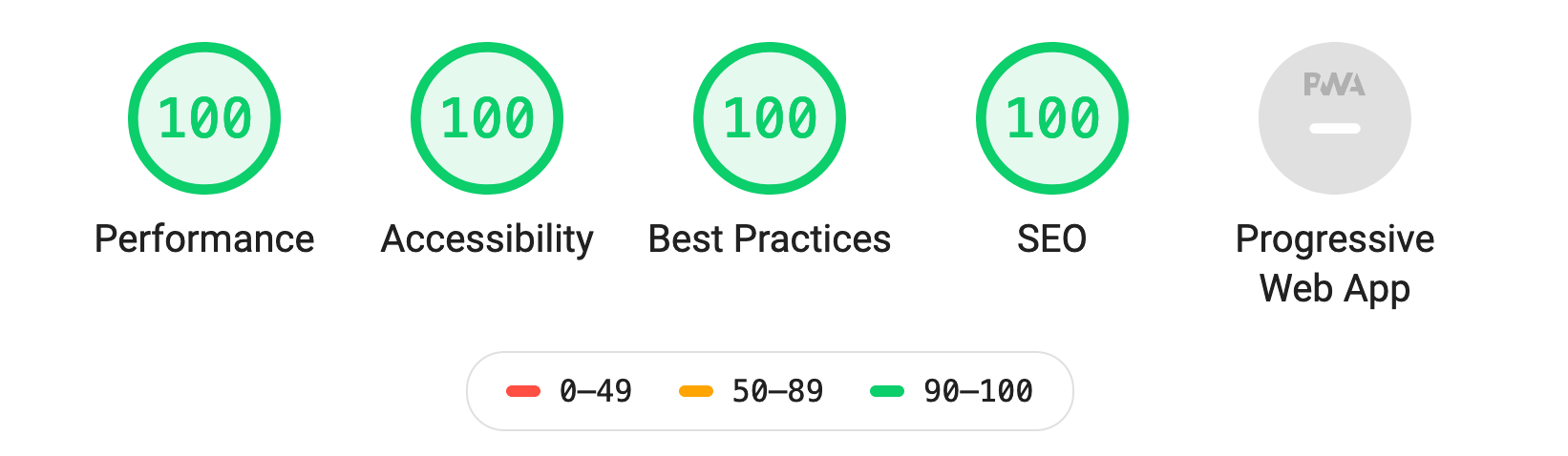

Out with the old…

Since its first iteration, the site has been built using Gatsby. At the time, Gatsby was a great option thanks to its unified data layer: after installing the gatsby-source-airtable plugin and updating a couple of env vars, I was up and running with a GraphQL API for my Airtable database (a feature which Airtable still don’t offer themselves). As time went on, I added more features, which meant more plugins, sources, and transformers. At that point, the simplicity that had attracted me to Gatsby was gone – updates became a huge chore as some packages got new versions while others fell by the wayside.

Not only was maintaining and updating a Gatsby site becoming harder, it also appeared to be losing favour as a platform for developers. Since purchasing Gatsby in February 2023, Netlify have turned the GatsbyJS.com homepage into a big ad for their services, laid off most of the team working on it[2], and spun out the clever data layer tech into Netlify Connect, a product seemingly targeted squarely at enterprise customers.

…and in with the new

I'm in the early stages of replatforming the website to Astro. This will coincide with a redesign of the site and some new features. The site will continue to use Airtable as its database.

The biggest barrier stopping me from moving away from Gatsby was the lack of a migration path for the Airtable GraphQL API. I first looked at StepZen, a service which promised to let me “Declaratively build GraphQL APIs from backend building blocks”. They even had a detailed video showing how to connect Airtable as a data source, but when I tried to follow it, I found that all references to Airtable had been purged from the UI. Stranger still, certain links were taking me to a product on the IBM website called “IBM API Connect”. It turns out StepZen was purchased by IBM in 2023 and subsequently rebranded, while the original product appears to have been neglected.

I’m currently using BaseQL, a service designed specifically for generating a GraphQL API from Airtable bases (or Google Sheets). It works well so far and has clear, reasonable pricing. I’ve got my fingers crossed that it sticks around (and the likes of IBM or Netlify can keep their grubby hands off it).
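For anyone curious how a generated GraphQL API like this gets consumed at build time (for example, from an Astro page's frontmatter, which can `await` a `fetch`), here is a minimal TypeScript sketch. It follows the general GraphQL-over-HTTP convention of POSTing JSON with `query` and `variables` and reading back `data` and/or `errors`. The endpoint URL, the bearer-token header, the `graphqlRequest` helper and the `components { name slug }` fields are all hypothetical placeholders, not BaseQL's actual schema or API.

```typescript
// Minimal GraphQL-over-HTTP client. The endpoint, auth header and `components`
// fields used below are hypothetical placeholders, not a real schema.

interface GraphQLResponse<T> {
  data?: T;
  errors?: { message: string }[];
}

// Injectable transport so the client can be exercised without a network.
type PostJson = (url: string, body: string, headers: Record<string, string>) => Promise<unknown>;

// Default transport: the global fetch (Node 18+, Deno, browsers, Astro frontmatter).
const defaultPost: PostJson = async (url, body, headers) => {
  const res = await fetch(url, { method: "POST", headers, body });
  if (!res.ok) throw new Error(`HTTP ${res.status} from ${url}`);
  return res.json();
};

async function graphqlRequest<T>(
  endpoint: string,
  query: string,
  variables: Record<string, unknown> = {},
  headers: Record<string, string> = {},
  post: PostJson = defaultPost,
): Promise<T> {
  const json = (await post(endpoint, JSON.stringify({ query, variables }), {
    "Content-Type": "application/json",
    ...headers,
  })) as GraphQLResponse<T>;
  // Query errors are reported in the response body's `errors` array.
  if (json.errors && json.errors.length > 0) {
    throw new Error(json.errors.map((e) => e.message).join("; "));
  }
  if (json.data === undefined) throw new Error("GraphQL response had no data");
  return json.data;
}

// Hypothetical query against a table of components.
const COMPONENTS_QUERY = `
  query {
    components {
      name
      slug
    }
  }
`;

interface ComponentsResult {
  components: { name: string; slug: string }[];
}

// Example usage (hypothetical endpoint and token):
// const { components } = await graphqlRequest<ComponentsResult>(
//   "https://example.com/graphql", COMPONENTS_QUERY, {}, { Authorization: `Bearer ${token}` },
// );
```

Passing the transport in as a parameter is a design choice for the sketch: it keeps the request/response handling testable without hitting a live API.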

Upcoming features

The primary reason for replatforming the site is to make adding new features easier. Here are a few I’ve got planned (in no particular order):

A carousel component

Carousels are bad and you shouldn't use them, but that doesn’t stop me from getting frequent requests to add a component page for them. It would be irresponsible of me to add this without also including a few thousand words arguing against their use.

Differentiate between types of system

Design Systems !== UI Frameworks !== Component Libraries

The current iteration of the site makes no distinction between these, apart from the ‘Open Source’ feature badge – and that only indicates that the code is public, not that it’s intended for use outside the original organisation.

Landing pages for design systems

I've always been reluctant to add pages for individual design systems because I've seen them as duplicating the functionality of each system's own site. However, the existing cards are getting cluttered, and that’s before I've added more design system feature badges.

Better dark mode

As someone who actually doesn’t mind light mode, I often forget the site even has the option. It isn’t until I see other users sharing screenshots with dark mode enabled that I think, “that doesn't look very good!”. I’m working on a new dark mode colour scheme that's easier on the eyes.

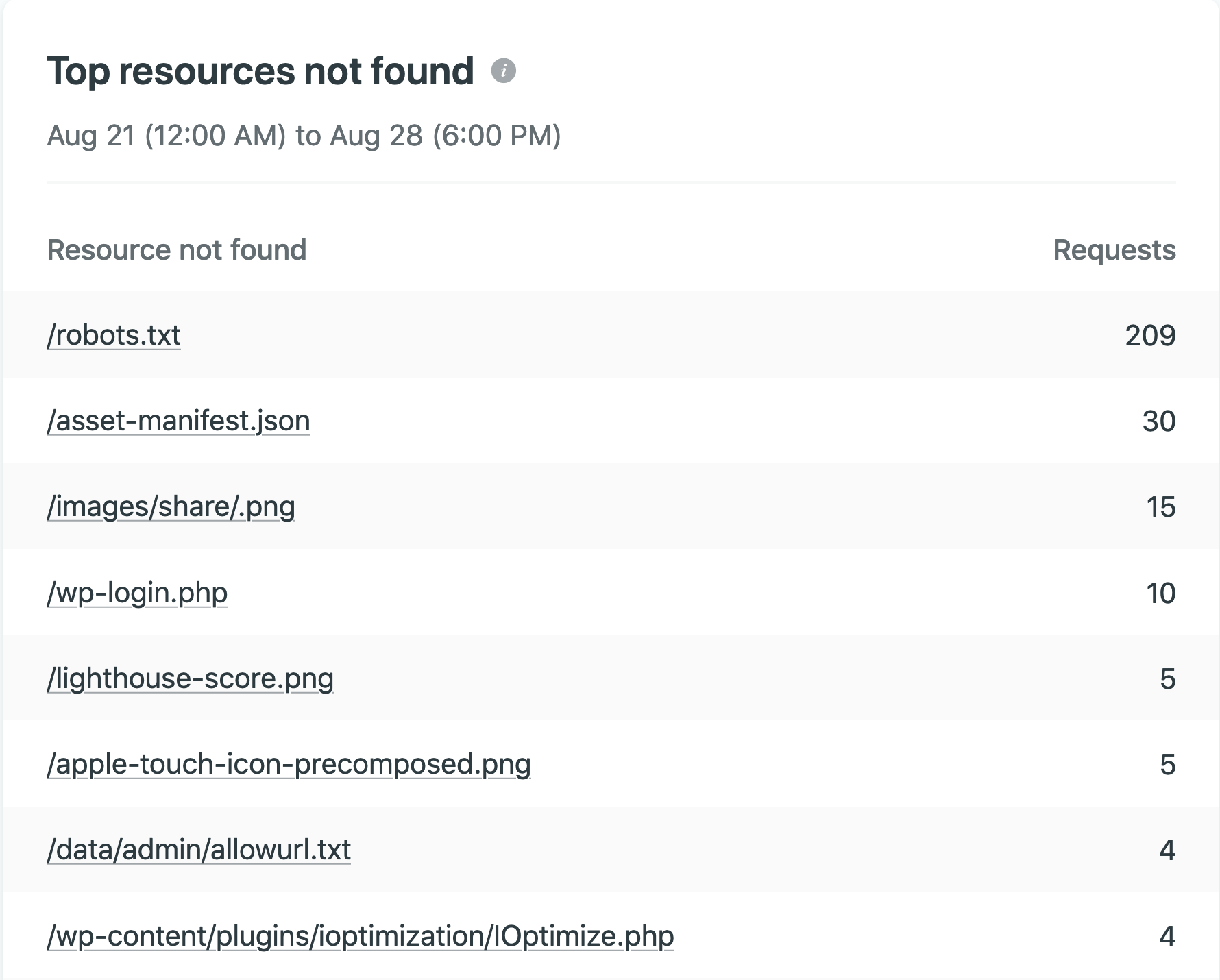

Automated checking for broken links

I want to relieve users (who I'm very grateful to!) of the burden of finding broken links and shift it onto an automated process.
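As a rough idea of what that automated process could look like, here is a minimal TypeScript sketch, assuming the example URLs can be exported from the database as a plain list. It sends a `HEAD` request to each link, falls back to `GET` for servers that reject `HEAD` (status 405 or 501), and treats a non-2xx status after redirects, or any network error, as broken. Concurrency is capped so no single site gets hammered. The function names, the concurrency of 5 and the 10-second timeout are hypothetical choices for illustration, not part of the site.

```typescript
// Hypothetical link checker: checkLink/findBrokenLinks and the defaults below
// are illustrative, not part of The Component Gallery's codebase.

interface HttpResponse {
  ok: boolean; // true for 2xx statuses
  status: number;
}

type Fetcher = (url: string, init: { method: "HEAD" | "GET"; redirect: "follow" }) => Promise<HttpResponse>;

interface LinkResult {
  url: string;
  ok: boolean;
  status?: number; // set when the server responded
  error?: string; // set when the request failed outright (DNS, timeout, TLS...)
}

// Default transport: the global fetch (Node 18+), with a 10-second timeout per request.
const defaultFetcher: Fetcher = (url, init) =>
  fetch(url, { ...init, signal: AbortSignal.timeout(10_000) });

async function checkLink(url: string, fetcher: Fetcher): Promise<LinkResult> {
  try {
    // HEAD avoids downloading the page body, but some servers don't support it.
    let res = await fetcher(url, { method: "HEAD", redirect: "follow" });
    if (res.status === 405 || res.status === 501) {
      res = await fetcher(url, { method: "GET", redirect: "follow" });
    }
    return { url, ok: res.ok, status: res.status };
  } catch (err) {
    return { url, ok: false, error: err instanceof Error ? err.message : String(err) };
  }
}

// Checks every URL with at most `concurrency` requests in flight; returns only broken links.
async function findBrokenLinks(
  urls: string[],
  fetcher: Fetcher = defaultFetcher,
  concurrency = 5,
): Promise<LinkResult[]> {
  const results: LinkResult[] = [];
  let next = 0;
  const worker = async (): Promise<void> => {
    // JavaScript runs callbacks on a single thread, so claiming an index with next++ is race-free.
    while (next < urls.length) {
      const url = urls[next++];
      results.push(await checkLink(url, fetcher));
    }
  };
  await Promise.all(Array.from({ length: Math.min(concurrency, urls.length) }, worker));
  return results.filter((r) => !r.ok);
}
```

Run on a schedule, a report like this could replace manual reports for most dead links, though some sites reject automated requests outright, so a handful of false positives would still need a human eye.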

Thanks

Compared with early 2019, when I began compiling examples for the site, there are now many more sources available for researching components.

Here are some that I keep going back to:

- The Design Systems News newsletter has always been a great resource which I’ve found indispensable for discovering new design systems.

- The Storybook component encyclopedia has a great glossary section which includes an image for every single one of its thousands of component examples[3]. It also really helped me when trying to find Storybook links for design systems.

- UI Guideline covers fewer components and systems than The Component Gallery, but it takes a meticulous approach, with in-depth analysis of naming, grouping, and component anatomy across systems. It looks like v2 has locked some content behind a paywall, but v1 of the site has loads of great content available for free.

- I'm primarily a developer, not a designer, so the most useful examples for me are code examples. However, design systems are a lot more than code: Design Systems for Figma is great for exploring the Figma files created for big-name design systems.

Finally, I wanted to thank everyone who has contributed to the website: all the people who’ve submitted suggestions via the contact form (I do read them eventually!); those of you who have opened GitHub Issues; and everyone who has sent kind words or shared links on Twitter[4].

The practice in which tech workers use their own product consistently to see how well it works and where improvements can be made. What Is ‘Dogfooding’? – New York Times ↩︎

Tweet by @lekoarts_de 2023-08-20 ↩︎

This is, unfortunately, only possible thanks to the consistent format of Storybook stories. It’s a feature I’d love to include on The Component Gallery but I’ve had to park due to the amount of work required. (See the GitHub Issue, Idea: include preview image of each component #43) ↩︎

Apologies if you’ve tried to contact me on LinkedIn – I probably assumed you were either a recruiter or a Large Language Model. ↩︎